AI is the study and design of computers and computer systems that can perform tasks that typically require human intelligence, such as visual perception, speech recognition, decision-making, and translation between languages.

Artificial intelligence is an area of computer science that studies how to create machines and programs that behave, in limited ways, like the human brain. Its major subfields include machine learning, deep learning, and natural language processing.

Artificial intelligence has been the subject of many science fiction books and movies, but many of the most dramatic possibilities remain remote technological prospects.

However, AI has been around for a long time, and it’s already a big part of our everyday lives. And we are still only at the beginning of its rise.

The truth is that AI will most likely play a significant role in our lives for many years to come. But the way it will do so is entirely up to us.

In this article, I will give you a very brief introduction to what artificial intelligence is and how it works.

So if you want to find out more about this topic and how it could affect your life in the future, read on.

What is Artificial Intelligence?

Artificial intelligence is the branch of computer science that focuses on building an intelligent machine capable of performing tasks typically requiring human intelligence.

Artificial intelligence attempts to replicate intelligent behavior in machines, and AI algorithms are used to solve a wide range of practical problems.

A long-term goal of some AI research is to build machines with human-like traits and intelligence that could eventually surpass human capabilities. One difference between human intelligence and machine intelligence is that a machine is not limited by biological needs or the physical constraints of the human body.

As a result, there is increasing interest in this field, both from individuals and organizations.

How does AI work?

AI works and learns by analyzing data in the form of inputs. It looks for relationships and patterns in the data and uses them to generate outputs, make decisions, or even predict future occurrences.

AI systems are often described in terms of three basic processes: learning, reasoning, and self-correction.

The learning process involves analyzing data, building models with learning algorithms to make sense of it, and using those models to make predictions. Data can come from multiple sources like sensors, cameras, databases, and more. Learning algorithms look for patterns in the data and determine which of them are relevant to the task.

The reasoning process involves choosing a suitable algorithm to help make the best decision based on the data. There are different types of algorithms available to choose from. Each algorithm has its pros and cons and is designed to address specific challenges.

The self-correction process involves fine-tuning or optimizing the model so that its outputs become more accurate over time.
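The learning, reasoning, and self-correction processes can be illustrated with a deliberately tiny Python sketch that fits a straight line to a few data points using gradient descent. The data, the function names (`predict`, `train`), and the learning rate are hypothetical choices for illustration, not a description of how any particular AI product works.

```python
# Hypothetical example: learn y = w * x + b from a handful of data points.

def predict(w, b, x):
    """Reasoning step: apply the current model to an input."""
    return w * x + b

def mean_squared_error(w, b, data):
    """Average squared difference between predictions and true values."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in data) / len(data)

def train(data, learning_rate=0.05, epochs=2000):
    w, b = 0.0, 0.0  # start with an uninformed model
    n = len(data)
    for _ in range(epochs):
        # Learning step: measure how the error changes with each parameter
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in data) / n
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in data) / n
        # Self-correction step: nudge the parameters to reduce the error
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    return w, b

# Hypothetical data generated by y = 2x + 1
data = [(0, 1), (1, 3), (2, 5), (3, 7), (4, 9)]
w, b = train(data)
print(round(w, 2), round(b, 2))  # 2.0 1.0
```

Each pass through the loop repeats the same cycle: make predictions, measure the error, and correct the model slightly.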

What are the different types of artificial intelligence?

One common classification divides AI into four types:

- Reactive Machines

- Limited Memory

- Theory of Mind

- Self-Awareness

Reactive machines and limited memory AI are classified as narrow (weak) AI. In contrast, theory of mind and self-aware AI, neither of which exists yet, would be classified as strong AI.

Reactive Machines

Reactive machines are the simplest type of AI. They have no memory, so they don’t store past experiences, and their capabilities are limited.

A reactive machine responds to its current input and produces an output from it. Given the same input, it will always produce the same output. It has no ability to recall what happened before, or to learn and improve from it.

Think of reactive machines as emulating a human being’s ability to respond to what is in front of them in the moment.

IBM’s Deep Blue chess computer is a well-known example of a reactive machine.
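To make the idea concrete, here is a minimal Python sketch of a reactive agent. The rule table, the observation names, and the `reactive_agent` function are all hypothetical; the point is only that the output depends on the current input alone and nothing is remembered between calls.

```python
# Hypothetical rule table: each observation maps directly to an action.
RULES = {
    "obstacle_ahead": "turn_left",
    "path_clear": "move_forward",
    "goal_reached": "stop",
}

def reactive_agent(observation):
    """Return an action based only on the current observation (no stored state)."""
    return RULES.get(observation, "wait")

# The same input always produces the same output; earlier calls have no effect.
print(reactive_agent("obstacle_ahead"))  # turn_left
print(reactive_agent("obstacle_ahead"))  # turn_left
print(reactive_agent("unknown"))         # wait
```

Because the agent keeps no state, there is nothing for it to learn from: repeating an input can never change its response.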

Limited Memory AI

Limited Memory AI is the next level up from reactive machines. It can store data in its memory and use the incoming data and pre-programmed knowledge to make predictions.

It can also continually improve by analyzing data over time, learning from it, and making decisions based on that information. This is similar to the way humans learn from past experiences.
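As a rough illustration, the Python sketch below keeps a short window of recent observations and uses them to predict the next value. The class name, the window size, and the moving-average prediction rule are hypothetical simplifications; real limited memory systems such as LSTM networks learn what to remember rather than using a fixed window.

```python
from collections import deque

class LimitedMemoryPredictor:
    """Hypothetical example: predicts the next value from recent observations only."""

    def __init__(self, window_size=3):
        # A deque with maxlen automatically forgets the oldest item when full
        self.memory = deque(maxlen=window_size)

    def observe(self, value):
        self.memory.append(value)

    def predict_next(self):
        if not self.memory:
            return None  # nothing observed yet
        return sum(self.memory) / len(self.memory)

predictor = LimitedMemoryPredictor(window_size=3)
for reading in [10, 12, 14, 16]:
    predictor.observe(reading)

print(list(predictor.memory))    # [12, 14, 16] (the oldest reading, 10, was dropped)
print(predictor.predict_next())  # 14.0
```

Unlike the reactive agent, this predictor's answer depends on what it has seen recently, but its memory is still limited to the last few observations.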

Three commonly cited examples of limited memory models are:

- Long Short-Term Memory (LSTM) networks

- Evolutionary Generative Adversarial Networks (E-GAN)

- Reinforcement learning

Limited memory AI underlies most modern AI systems. Chatbots and virtual assistants like Siri, Alexa, and Cortana, for example, are all limited memory AI.

Theory of Mind

Theory of mind AI is still in its early research stages and probably a long way from being realized. It would give a machine the ability to understand what other people think and feel, and to use that understanding of emotional behavior to decide how to react.

Self-Awareness

This is the final type of AI and the most complex of all.

It would be more than just a machine that can learn; it would be a sentient entity that can understand its own thoughts and emotions.

Self-aware AI is often described as the ultimate goal of AI. Such a system would make its own decisions and take control of its own actions, using logic and reasoning while taking into account the consequences of those decisions. It would interact with other AIs and humans, learn from its own mistakes, and improve.

In theory, self-aware AI could outperform humans in every respect.

It is still very far away, and we will probably never see it in our lifetime. Depending on how you look at it, this might be a good thing, considering it could either advance society or eradicate humanity.

Alternate AI classification

There is an alternate classification of AI that’s used more in science and tech circles.

Artificial Narrow Intelligence (ANI)

- All currently existing AI, including reactive machines and limited memory AI, is artificial narrow intelligence.

- While artificial narrow intelligence can appear highly sophisticated, it only performs the specific task it was designed or trained for. It cannot take the knowledge it has gained and apply it to a different task.

- Artificial narrow intelligence is therefore considered weak AI.

Artificial General Intelligence (AGI)

- AGI would be able to learn, reason, plan, communicate, and adapt across many different tasks, as humans do.

- If AGI were developed, it could make much human labor obsolete, since it could potentially do everything a human can do, only faster thanks to its computational power.

- Artificial general intelligence doesn’t exist yet. However, dozens of large organizations are currently researching it.

Artificial Superintelligence (ASI)

- Artificial superintelligence would be capable of abstraction, interpretation, and other human-like cognitive processes at far higher speed and efficiency than the human brain.

- It is a step beyond human-level machine intelligence and, as such, is considered the pinnacle of AI research.

- It marks the point at which AI would eclipse human intelligence.

Now let’s look at some of the essential terms in artificial intelligence.

Machine Learning

“The field of study that gives computers the ability to learn without being explicitly programmed.” – Arthur Samuel (AI pioneer)

Machine learning (ML) refers to a field of artificial intelligence that uses statistical techniques to “teach” computers how to perform tasks. In other words, it focuses on making computers learn to perform tasks by analyzing data and patterns.

Machine learning methods are used to analyze data sets and make predictions based on what they have learned. This is accomplished by training the ML algorithm with example data. Training can take considerable time and usually requires a significant amount of data.

Once trained, the resulting model can be applied to new data without being retrained, which makes it useful for real-time applications.

Machine learning is a crucial element of artificial intelligence, as it gives machines the ability to learn without being explicitly programmed. And a machine learning system can gradually improve as it is given more data.

There are four main types of machine learning:

- Supervised machine learning

- Unsupervised machine learning

- Semi-supervised machine learning

- Reinforcement machine learning

Supervised machine learning is a type of machine learning where each sample in the training data set is labeled with the correct answer, such as a class label. Supervised learning algorithms learn to recognize the patterns in the data that predict those labels and then use them to make predictions.

For example, if you want to build a system that can detect whether a person is crying or laughing, you would need to provide the algorithm with images labeled with these emotions. The trained algorithm would then be able to identify the features that differentiate between the two classes.

In supervised learning, both the inputs and the expected outputs are specified.

This means that the model learns the relationship between inputs and outputs from the training data and applies it to new data.
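Here is a minimal Python sketch of supervised learning using k-nearest neighbors, one of many possible supervised algorithms. The two numeric features (a "mouth curve" score and a "tear visibility" score) and the training data are hypothetical stand-ins; a real image classifier would learn its features from pixels.

```python
import math
from collections import Counter

# Hypothetical labeled training data: ((mouth_curve, tear_visibility), label)
training_data = [
    ((0.9, 0.1), "laughing"),
    ((0.8, 0.2), "laughing"),
    ((0.7, 0.0), "laughing"),
    ((0.1, 0.9), "crying"),
    ((0.2, 0.8), "crying"),
    ((0.0, 0.7), "crying"),
]

def k_nearest_neighbors(query, data, k=3):
    """Classify `query` by majority vote among its k closest labeled examples."""
    by_distance = sorted(data, key=lambda item: math.dist(query, item[0]))
    votes = Counter(label for _, label in by_distance[:k])
    return votes.most_common(1)[0][0]

# These inputs are not in the training data; the labels are inferred from it.
print(k_nearest_neighbors((0.85, 0.15), training_data))  # laughing
print(k_nearest_neighbors((0.15, 0.75), training_data))  # crying
```

The labels in the training data are what make this supervised: without them, the algorithm would have nothing to vote with.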

Unsupervised machine learning is a subset of machine learning that focuses on learning patterns from unlabeled data, without labels provided by a human.

It’s helpful when data is available but there are no labels to train with.

In this sense, unsupervised learning is different from supervised learning, which requires labeled data to produce results.

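For example, it can group similar items based on their features, as in customer segmentation, where customers with similar behavior are grouped together.

Common unsupervised techniques include clustering methods such as k-means and probabilistic clustering, as well as some neural network and deep learning approaches such as autoencoders.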
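The following Python sketch shows k-means clustering, one common unsupervised technique, applied to a hypothetical customer segmentation task. The customer data, the two features, and the choice of starting centroids are assumptions made for illustration.

```python
import math

def k_means(points, centroids, iterations=10):
    """Group unlabeled points around the given starting centroids (k = len(centroids))."""
    clusters = []
    for _ in range(iterations):
        # Assignment step: attach each point to its nearest centroid
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)), key=lambda i: math.dist(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of the points assigned to it
        centroids = [
            tuple(sum(axis) / len(cluster) for axis in zip(*cluster)) if cluster else centroid
            for cluster, centroid in zip(clusters, centroids)
        ]
    return centroids, clusters

# Hypothetical, unlabeled customer data: (visits per month, average spend)
customers = [(2, 20), (3, 25), (2, 22), (20, 200), (22, 210), (19, 190)]
centroids, clusters = k_means(customers, centroids=[customers[0], customers[3]])
print(clusters[0])  # [(2, 20), (3, 25), (2, 22)]
print(clusters[1])  # [(20, 200), (22, 210), (19, 190)]
```

The algorithm is never told which customers belong together; the two segments emerge from the features alone, and it is up to a human to interpret what each group means.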

As the name suggests, semi-supervised machine learning is a middle ground between supervised and unsupervised machine learning.

In this approach, a model is trained using both labeled and unlabeled data.

It’s usually used when not enough labeled data is available to train the system. It learns initial patterns from a small labeled dataset and then applies that knowledge to a larger unlabeled dataset to improve accuracy.
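One simple semi-supervised technique is self-training (pseudo-labeling), sketched below in Python. This is only one of several semi-supervised approaches, and the one-dimensional data, the "low"/"high" labels, and the helper names are hypothetical.

```python
import math

def nearest_label(point, labeled):
    """Return the label of the closest labeled example (1-nearest neighbor)."""
    _, closest_label = min(labeled, key=lambda item: math.dist(point, item[0]))
    return closest_label

def self_train(labeled, unlabeled):
    """Self-training sketch: repeatedly take the unlabeled point closest to the
    labeled set, give it a pseudo-label, and add it to the labeled set."""
    labeled = list(labeled)
    remaining = list(unlabeled)
    while remaining:
        # The closest point is treated as the prediction we are most confident about
        point = min(remaining, key=lambda p: min(math.dist(p, q) for q, _ in labeled))
        labeled.append((point, nearest_label(point, labeled)))
        remaining.remove(point)
    return labeled

# Hypothetical 1-D data: only two labeled examples, six unlabeled ones
labeled = [((0.0,), "low"), ((10.0,), "high")]
unlabeled = [(1.0,), (2.0,), (3.0,), (7.0,), (8.0,), (9.0,)]
result = {point[0]: label for point, label in self_train(labeled, unlabeled)}
print(result)  # 1.0, 2.0, 3.0 are labeled 'low'; 7.0, 8.0, 9.0 are labeled 'high'
```

The two labeled examples provide the starting knowledge, and each newly pseudo-labeled point helps label the next one.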

Reinforcement learning is a machine learning technique that allows a computer system to learn a task by trial and error, reinforcing successful outcomes.

The system, often called an agent, takes actions in an environment and receives rewards or penalties based on the outcomes. Over many attempts, it learns which action is most likely to lead to the best result in each situation, learning from its mistakes and adapting accordingly.

Although the machine learns through trial and error, the developer still defines the rules of the environment and the rewards that tell the system whether it is accomplishing the task.
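The sketch below uses tabular Q-learning, one classic reinforcement learning algorithm, in a hypothetical five-cell corridor where the only reward is at the right-hand end. The environment, the reward of +1, and the hyperparameters (`alpha`, `gamma`, `epsilon`) are illustrative assumptions.

```python
import random

N_STATES = 5        # hypothetical corridor with positions 0..4
GOAL = 4            # reaching position 4 ends an episode with reward +1
ACTIONS = (-1, +1)  # move left or move right

def step(state, action):
    """Environment rules set by the developer: move, stay inside the corridor, reward at the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL

def q_learning(episodes=200, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}  # value estimate per (state, action)
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Trial and error: usually take the best-known action, sometimes explore at random
            if rng.random() < epsilon:
                action = rng.choice(ACTIONS)
            else:
                action = max(ACTIONS, key=lambda a: q[(state, a)])
            next_state, reward, done = step(state, action)
            # Reinforcement: move the estimate toward reward + discounted best future value
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            q[(state, action)] += alpha * (reward + gamma * best_next - q[(state, action)])
            state = next_state
    return q

q = q_learning()
policy = {s: max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(GOAL)}
print(policy)  # {0: 1, 1: 1, 2: 1, 3: 1}: move right in every position
```

Nobody tells the agent to move right. It discovers this by trial and error, because the reward at the goal gradually propagates back to earlier positions through the value estimates.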

Examples of machine learning

- Speech recognition

- Chatbots

- Online shopping recommendation engines

- Automated stock trading

Deep Learning

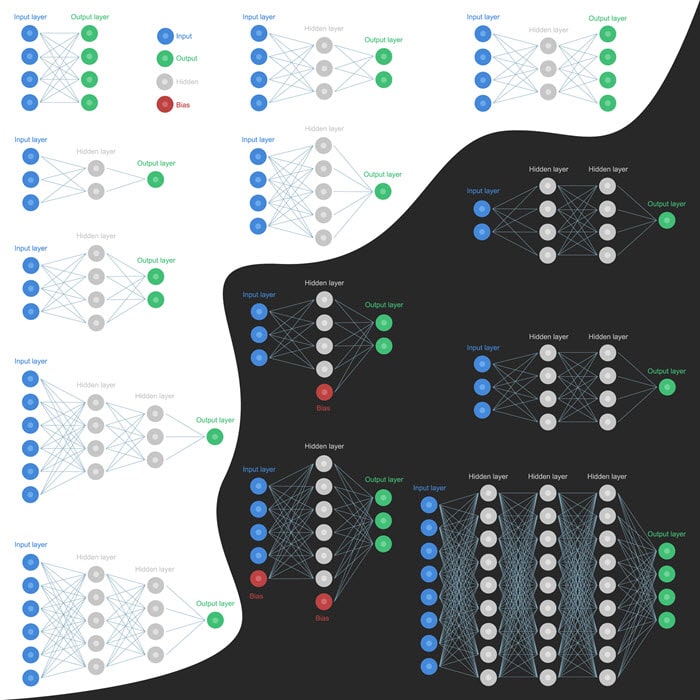

Deep learning is a subset of machine learning that uses algorithms designed to process data in a hierarchical or distributed way. In essence, it uses a neural network with three or more layers. These artificial neural networks attempt to simulate the behavior of the human brain, albeit far from matching its ability, allowing them to “learn” from large amounts of data.

While a neural network with a single layer can still make approximate predictions, additional layers can help to optimize and refine for accuracy.
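A classic illustration of why extra layers help is the XOR function (output 1 when exactly one input is 1). A single threshold neuron cannot compute XOR, because no straight line separates its two classes, but a network with one hidden layer can. In the Python sketch below the weights are set by hand for clarity; in real deep learning they are learned from data. The function names are hypothetical.

```python
def threshold(z):
    """Step activation: output 1 if the weighted input is positive, else 0."""
    return 1 if z > 0 else 0

def neuron(inputs, weights, bias):
    """Weighted sum of the inputs plus a bias, passed through the activation."""
    return threshold(sum(w * x for w, x in zip(weights, inputs)) + bias)

def two_layer_xor(x1, x2):
    # Hidden layer: two neurons that each detect a simpler pattern
    h_or = neuron((x1, x2), (1, 1), -0.5)   # fires if at least one input is 1
    h_and = neuron((x1, x2), (1, 1), -1.5)  # fires only if both inputs are 1
    # Output layer combines the hidden features: "OR but not AND" is XOR
    return neuron((h_or, h_and), (1, -1), -0.5)

for a in (0, 1):
    for b in (0, 1):
        print(a, b, two_layer_xor(a, b))
```

The hidden layer turns the raw inputs into intermediate features, and the output layer builds the final answer from them, which is the same layered idea deep networks apply at a much larger scale.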

Deep learning is one of the most successful approaches in machine learning. Many of the most significant advances in artificial intelligence in recent years are due to deep learning.

It’s used in most natural language processing (NLP), image recognition, and speech recognition applications.

A deep learning model often has to combine different types of information to produce a single outcome, while at the same time learning how those types of information relate to each other.

For example, in speech recognition, the outcome is predicting the word that is being said. The different types of information are the sound wave, the phonemes, the syllables, the words, and the sentence’s meaning.

Differences between machine learning and deep learning

Deep learning is a subset of machine learning. Its multi-layered artificial neural networks make it more complex than many traditional machine learning models, such as linear regression or decision trees.

It also requires larger datasets and more resources.

Natural Language Processing

Natural language processing (NLP) refers to a branch of artificial intelligence that seeks to enable computers to understand natural human language.

NLP is concerned with the representation, processing, analysis, and understanding of human language, as used in speech, text, handwriting, and other unstructured data sources.

It combines machine learning and deep learning with computational linguistics and uses syntactic analysis and semantic analysis to understand language.

Syntactic Analysis

Syntactic analysis, or parsing, is a technique used to determine the grammatical structure of a sentence: how its words are arranged and how they relate to each other according to the rules of grammar.
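As a toy illustration, the Python sketch below is a small recursive-descent parser for a hand-written grammar. The grammar, the lexicon, and the `parse_sentence` function are hypothetical; real NLP parsers rely on far larger grammars or on statistical and neural models.

```python
# Hypothetical lexicon: each word is assigned a part of speech
LEXICON = {
    "the": "Det", "a": "Det",
    "dog": "N", "cat": "N", "ball": "N",
    "chased": "V", "slept": "V",
}

def parse_sentence(sentence):
    """Parse with the toy grammar
        S  -> NP VP
        NP -> Det N
        VP -> V NP | V
    and return a nested-tuple parse tree, or raise ValueError if ungrammatical."""
    words = sentence.lower().split()
    tags = [LEXICON.get(w) for w in words]
    if None in tags:
        raise ValueError("unknown word in sentence")
    pos = 0

    def expect(tag):
        # Consume the next word if it has the expected part of speech
        nonlocal pos
        if pos < len(words) and tags[pos] == tag:
            pos += 1
            return (tag, words[pos - 1])
        raise ValueError(f"expected {tag} at position {pos}")

    def noun_phrase():
        return ("NP", expect("Det"), expect("N"))

    def verb_phrase():
        verb = expect("V")
        if pos < len(words):  # the verb has an object
            return ("VP", verb, noun_phrase())
        return ("VP", verb)

    tree = ("S", noun_phrase(), verb_phrase())
    if pos != len(words):
        raise ValueError("extra words after a complete sentence")
    return tree

print(parse_sentence("The dog chased a ball"))
# ('S', ('NP', ('Det', 'the'), ('N', 'dog')), ('VP', ('V', 'chased'), ('NP', ('Det', 'a'), ('N', 'ball'))))
```

A sentence like "dog the slept" raises an error, because its words do not fit the grammar even though each word is known.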

Semantic Analysis

Semantic analysis refers to the process of analyzing natural language and determining its meaning. This is accomplished using techniques that recognize, identify, and classify words, phrases, and sentence structures.

For example, “What is the weather today?” could be analyzed using NLP techniques to determine the question’s meaning: the speaker is asking for today’s weather conditions.

Semantic analysis also involves the analysis of natural language for information extraction. This involves identifying entities, such as people, places, or things, and determining their relationships.
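The Python sketch below shows, in a very simplified rule-based form, what a semantic analysis step might output: an intent plus extracted entities. The keyword lists, the intent names, and the `analyze` function are hypothetical; production systems typically learn these patterns from data rather than using fixed keyword rules.

```python
import re

# Hypothetical keyword rules for recognizing what the user wants
INTENT_KEYWORDS = {
    "get_weather": {"weather", "rain", "temperature", "forecast"},
    "get_time": {"time", "clock"},
}
DATE_WORDS = {"today", "tomorrow", "yesterday"}
KNOWN_PLACES = {"paris", "london", "tokyo"}

def analyze(utterance):
    """Return a simple meaning representation: an intent plus extracted entities."""
    words = re.findall(r"[a-z]+", utterance.lower())
    intent = next(
        (name for name, keys in INTENT_KEYWORDS.items() if keys & set(words)),
        "unknown",
    )
    entities = {}
    for w in words:
        if w in DATE_WORDS:
            entities["date"] = w
        elif w in KNOWN_PLACES:
            entities["place"] = w.title()
    return {"intent": intent, "entities": entities}

print(analyze("What is the weather today?"))
# {'intent': 'get_weather', 'entities': {'date': 'today'}}
print(analyze("Will it rain in London tomorrow?"))
# {'intent': 'get_weather', 'entities': {'place': 'London', 'date': 'tomorrow'}}
```

Two differently worded questions map to the same intent, which is the kind of meaning-level result semantic analysis aims for.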

NLP has applications in various fields, including search engines, information retrieval, speech recognition, machine translation, language generation, question answering, and chatbots.

Neural Networks

Artificial neural networks, or simply neural networks, are a family of machine learning models loosely inspired by the human brain.

They are loosely modeled on biological neural networks in an attempt to replicate the way humans learn. An artificial neural network is made up of “neurons” (nodes) linked by weighted connections, which play a role similar to synapses. Each neuron receives inputs from the neurons connected to it, combines them (typically as a weighted sum passed through an activation function), and sends the result on to the neurons in the next layer.

During training, the network adjusts the weights on its connections, which allows it to learn and make decisions. The number of layers and the number of nodes within each layer are chosen by the designer based on the task the network has to perform.
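To show how a single artificial neuron computes its output and how adjusting its weights lets it learn, here is a Python sketch of the perceptron learning rule, one of the earliest neural network training methods. The task (learning logical AND), the learning rate, and the function names are hypothetical choices; modern networks use many layers and train with backpropagation and gradient descent instead.

```python
def neuron_output(inputs, weights, bias):
    """Weighted sum of inputs plus a bias, passed through a step activation."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

def train_perceptron(examples, learning_rate=1.0, epochs=20):
    """Perceptron rule: shift each weight in proportion to the error and its input."""
    weights = [0.0] * len(examples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in examples:
            error = target - neuron_output(inputs, weights, bias)
            weights = [w + learning_rate * error * x for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias

# Hypothetical task: learn the logical AND of two inputs
and_examples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(and_examples)
for inputs, target in and_examples:
    print(inputs, neuron_output(inputs, weights, bias), "expected", target)
```

Each mistake nudges the weights, so the neuron's decisions gradually come to match the training examples.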

Examples of AI applications in our day-to-day life

- Grammar checkers

- Face recognition to unlock a phone

- Search engine algorithms

- Chatbots

- AI Writing Tools

- Netflix recommendations

- Siri

- Alexa

- Uber

- Curated content and feeds in social media

- Non-player characters in video games

- Modern GPS systems

What are the advantages and disadvantages of Artificial Intelligence?

AI is not new. It has been around for decades. The only thing that has changed is the level of sophistication. Artificial intelligence is changing the way we work, live, and play. AI will shape the future in ways that are unimaginable to us.

ADVANTAGES

Reduction of Human Error

One of the advantages of artificial intelligence is that it can greatly reduce human error, resulting in more accuracy and precision. That means less risk of mistakes that could otherwise lead to injury or death.

Human error can be caused by fatigue, carelessness, attention lapse, unfamiliarity with the task, language barrier, and more.

Excels at repetitive tasks

AI excels at repetitive tasks because the algorithms have been designed for that specific purpose. They are made to perform a very narrow set of tasks repeatedly, and they can do so with incredible efficiency and speed.

Automation

AI automation can dramatically increase productivity because it can take over many of the mundane tasks we do today.

Performs dangerous tasks instead of humans

Artificial intelligence reduces the need for humans to be directly exposed to dangerous situations, such as bomb disposal and work in other high-risk industries. As a result, AI can make many jobs safer.

Speed

Artificial intelligence can process large volumes of data in a fraction of the time it would take a human being.

It can also find patterns in the data and make decisions in less time, and it can be used to detect, identify, diagnose, and predict problems in real time. Without AI, some of these tasks could take humans months.

Available 24/7

AI can work 24/7 without sleep or rest because it’s not limited by human biological needs or physical constraints. An AI is not going to get sick or suffer from fatigue.

It can work around the clock, whenever it is needed.

Reduces human resources

Artificial intelligence can handle tasks that would otherwise require human staff, so it can save money by reducing the number of employees needed for those tasks.

Once deployed, an AI system does not need the same ongoing training and education as employees, which can also reduce human capital costs.

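Less Emotional Bias

An AI system is not influenced by human emotions, moods, or fatigue, so it applies the same criteria every time it makes a decision.

However, this does not make AI free of bias. A model trained on biased data can reproduce prejudice related to race, gender, age, or political views when providing insights or making decisions, so its data and outputs need to be checked carefully.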

DISADVANTAGES

High cost to develop

AI technology is expensive to develop. It requires high-powered servers, complex algorithms, massive data storage, and sophisticated software. This makes it a costly proposition.

Lack of AI Specialists

AI is a highly specialized field, and there are not enough professionals to meet the high demand.

Increases Unemployment

It can result in a loss of jobs as businesses replace their workers with AI.

Effectiveness relies on quality

The effectiveness of AI capabilities depends on the quality of programming and data used to create and train it.

Final Thoughts

I hope this article has given you a better idea of what artificial intelligence is and how it works.

AI is one of the most significant forces propelling technology to new heights, allowing us to achieve things we could never have done before.

While the road ahead for AI may be bumpy and unpredictable, it’s encouraging that we are getting a little more comfortable with each step, and along the way we will learn just how far is too far.
